Abstract

This blog explores how Claude AI is transforming productivity by enabling teams to automate repetitive tasks, streamline workflows, and improve accuracy across various operations. By integrating AI into everyday processes, organizations can accelerate execution, reduce manual effort, and make faster, data-driven decisions.

From Chadura Tech’s perspective, the real value of AI lies in augmenting human capabilities, not replacing them. While Claude AI significantly enhances efficiency and innovation, the blog also emphasizes the importance of human oversight to prevent over-reliance and ensure consistent, reliable outcomes in real-world applications.

Claude AI

There is a particular sensation that comes with talking to Claude for the first time. Not the novelty of a coherent AI reply; we are well past that. It is something subtler: the feeling of being genuinely heard. Of having your actual question answered, rather than a sanitised version of it. Of encountering a system that seems, against expectation, to care about getting things right for the right reasons.

That impression is deliberate. It is the product of years of research at Anthropic, the San Francisco AI safety company behind Claude, into a deceptively hard problem: how do you build a powerful AI that isn't just capable, but actually trustworthy?

The answer they arrived at, embodied in the current Claude 4.6 model family, is one of the most consequential experiments in contemporary artificial intelligence.

Born from a Safety-First Philosophy

To understand Claude, you need to understand Anthropic. The company was founded in 2021 by Dario Amodei, Daniela Amodei, and colleagues who had previously worked at OpenAI. Their departure was driven by a conviction that the field was moving too fast with insufficient attention to what happens when things go wrong. That concern has only sharpened as the technology has advanced.

Anthropic describes itself, unapologetically, as an AI safety company. That's not marketing language. It shapes every decision from research agenda to product design. The company publishes substantial work on AI alignment, interpretability, and the challenge of building systems that reliably do what humans actually want — not merely what they literally say.

Claude emerged from this environment. Its training involves a technique called Constitutional AI (CAI), developed at Anthropic, which teaches the model to evaluate its own outputs against a set of principles, rather than relying solely on human approval signals for every decision. The result is a model that has internalised certain values — honesty, intellectual humility, care for the user's real wellbeing — rather than simply pattern-matching to what human reviewers historically liked.

"The goal was never just to build an AI that sounds good. The goal was to build one that actually is good — and that turns out to be a much harder problem than it first appears."

This distinction — between sounding trustworthy and being trustworthy — runs through every aspect of how Claude is designed. It surfaces in the way Claude handles uncertainty, in the texture of its refusals, and in the way it engages with genuinely difficult questions. Claude will tell you when it doesn't know something. It will push back when it disagrees. It will acknowledge the limits of its own reasoning in ways that feel candid rather than scripted.

What Claude Can Actually Do

Before exploring the philosophy further, the practical picture deserves attention — because for all the emphasis on values, Claude is also simply very good at a wide range of tasks.

In writing and communication, Claude produces clear, well-structured prose across an enormous range of styles and contexts. It can draft formal legal correspondence and a children's story in the same session, adapting its register with a fluency that reflects genuine understanding of how language works in different contexts. Writers use it as a drafting partner and developmental editor; businesses use it for documentation, marketing copy, and internal communications.

Its analytical capabilities are equally strong. Present Claude with a dense academic paper, a contract full of legalese, or a financial report, and it will extract key points, identify tensions, and surface implications with a thoroughness that a human analyst would need considerably longer to match. It is particularly good at presenting the strongest version of competing arguments, which is genuinely useful when navigating complex decisions rather than simply looking for confirmation.

The developer community has been among Claude's most enthusiastic adopters. Claude writes, reviews, and debugs code across dozens of programming languages, and can explain a block of code to a non-programmer and discuss architectural trade-offs with a senior engineer in the same conversation. Claude Code — a command-line tool extending Claude's capabilities into an agentic coding environment — has attracted particular attention for handling extended, multi-step engineering tasks with a degree of reliability that earlier tools didn't approach.

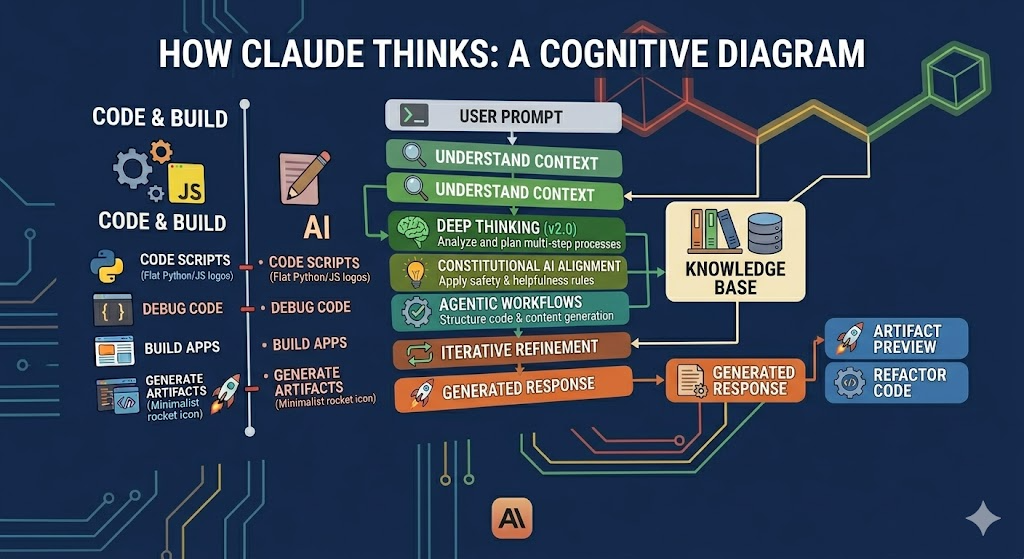

How Claude Thinks

Key workflow sections shown in the diagram:

The Input Stage: It begins with a User Prompt, which triggers the "Understand Context" phase, pulling from a broad Knowledge Base (represented by books and data icons).

The Thinking Engine: The core vertical stack shows Claude’s internal processing in three layers: Deep Thinking (v2.0), which analyzes and plans multi-step logic; Constitutional AI Alignment, which applies safety and ethical rules; and Agentic Workflows, which structure the actual generation of code or content.

The Output Stage: After Iterative Refinement, it produces a Generated Response, which can manifest as an Artifact Preview (interactive UI/code) or Refactored Code.

Specialized Capabilities: The left sidebar highlights specific strengths in Code & Build (Python, JS, React), Debugging, and App Development.

Claude is a large language model — a neural network trained on enormous quantities of text to predict and generate language. That description is accurate as far as it goes, but it is a little like describing a concert pianist as someone who moves their fingers on keys. Technically true; somewhat missing the point.

What distinguishes Claude at the level of training is not the underlying architecture (transformer-based models are now widely used) but what it was trained to optimise for. Constitutional AI adds a layer beyond standard reinforcement learning from human feedback: the model is trained to critique and revise its own outputs against written principles. The result is closer to an internalised value system than to a lookup table of approved responses.
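The critique-and-revise idea is easier to picture with a toy sketch. This is not Anthropic's training code. In real training the critiques and revisions come from the model itself; here, simple string checks stand in for them. The principle functions, the `critique` and `revise` helpers, and the `max_rounds` parameter are all hypothetical names chosen for illustration.

```python
import re
from typing import Callable, List, Optional

# A principle inspects a draft and returns an issue label, or None if it passes.
# These two principles are illustrative assumptions, not Anthropic's actual ones.
Principle = Callable[[str], Optional[str]]


def avoid_overclaiming(draft: str) -> Optional[str]:
    """Flag drafts that assert certainty with the word 'definitely'."""
    if re.search(r"\bdefinitely\b", draft, flags=re.IGNORECASE):
        return "overclaiming"
    return None


def avoid_empty_answer(draft: str) -> Optional[str]:
    """Flag drafts that contain no substantive text."""
    if not draft.strip():
        return "empty"
    return None


PRINCIPLES: List[Principle] = [avoid_overclaiming, avoid_empty_answer]


def critique(draft: str) -> List[str]:
    """Collect the issue labels raised by every principle."""
    issues = []
    for principle in PRINCIPLES:
        issue = principle(draft)
        if issue is not None:
            issues.append(issue)
    return issues


def revise(draft: str, issues: List[str]) -> str:
    """Toy revision step: a real system would ask the model to rewrite."""
    if "empty" in issues:
        return "I don't know enough to answer that."
    if "overclaiming" in issues:
        return re.sub(r"\bdefinitely\b", "probably", draft, flags=re.IGNORECASE)
    return draft


def critique_and_revise(draft: str, max_rounds: int = 3) -> str:
    """Alternate critique and revision until no principle objects."""
    for _ in range(max_rounds):
        issues = critique(draft)
        if not issues:
            break
        draft = revise(draft, issues)
    return draft


print(critique_and_revise("This will definitely work."))
```

The point of the loop is that the written principles, not a per-response human rating, decide whether a draft needs another pass.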

Modern Claude models also have very large context windows, allowing them to work with lengthy documents, extended conversations, and complex multi-part tasks without losing the thread. This is practically important for the kinds of deep-work tasks where Claude earns its keep: you can provide an entire codebase, a long research report, or a sustained creative project, and it maintains coherence across the whole.

The Current Model Family

Opus 4.6 (most capable): Maximum capability for complex, long-horizon tasks. Deep research, advanced coding, and sophisticated multi-step reasoning.

Sonnet 4.6 (everyday default): An excellent balance of quality and efficiency. The standard choice for most conversational, analytical, and writing tasks.

Haiku 4.5 (fast and lightweight): Optimised for high-volume, lower-complexity tasks and API applications where speed is the priority.
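For teams deciding which tier to use, the guidance above can be written down as a small routing rule. The sketch below is one possible reading of it, not an official selection policy. The `Task` fields and the `pick_model` function are hypothetical, and the returned strings are the display names from this post rather than API model identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a piece of work to route."""
    complexity: str            # "low", "medium", or "high" (assumed scale)
    speed_critical: bool = False
    high_volume: bool = False


def pick_model(task: Task) -> str:
    """Map a task to one of the three tiers described above."""
    # Complex, long-horizon work goes to the most capable tier.
    if task.complexity == "high":
        return "Opus 4.6"
    # Simple work where speed or volume dominates goes to the lightweight tier.
    if task.complexity == "low" and (task.speed_critical or task.high_volume):
        return "Haiku 4.5"
    # Everything else falls back to the everyday default.
    return "Sonnet 4.6"


print(pick_model(Task(complexity="low", high_volume=True)))
```

Keeping the default in the final line means an unclassified task lands on the balanced tier rather than the most expensive or the least capable one.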

The Values Question

Every AI assistant has guardrails. What distinguishes Claude is the sophistication and philosophical coherence of the reasoning behind its limits — and the candour with which those limits are communicated.

Claude doesn't simply refuse certain categories of request and move on. When it declines to do something, it typically explains why, in terms that reflect genuine ethical reasoning rather than a canned disclaimer. When a request sits in a grey area, it says so explicitly, acknowledging the tension between competing values rather than pretending the decision is straightforward.

"Being unhelpful is itself a harm. Refusing to give a clear answer when a clear answer exists is a disservice to the person asking."

This matters because excessive caution is a real and underappreciated failure mode in AI assistants. Systems that refuse too readily, that add disclaimers to everything, that treat users as incapable of handling real information — these systems fail people even as they protect themselves from criticism. Claude's designers have been explicit about this tension, and the result is an AI that will discuss difficult topics while declining specific requests that would constitute genuine harm. The line isn't always easy to draw, and Claude will sometimes get it wrong in both directions. But the attempt to draw it thoughtfully rather than reflexively is itself meaningful.

Claude in Practice

For knowledge workers — lawyers, academics, journalists, consultants — Claude has become a research and drafting tool that compresses hours into minutes. A lawyer reviewing a contract can have Claude flag unusual clauses, explain unfamiliar terms, and draft revision language, freeing them to focus on the analysis that actually requires their judgment. A researcher can use it to synthesise a field's literature, identify gaps, and help structure an argument. The leverage is real and significant.

In education, Claude's willingness to explain ideas from first principles, to meet a learner where they are, and to answer follow-up questions without impatience makes it an effective tutor. It is also notably resistant to doing homework in a way that bypasses learning — it will often try to guide rather than simply deliver an answer, particularly when a question seems designed to extract rather than understand.

Creative professionals have found in Claude a collaborator that is both capable and unusually good at understanding intent. It can sustain a consistent character across a long narrative, write in a wide range of voices, and contribute ideas that go beyond competent execution into genuinely surprising territory. Its responses to interesting creative challenges have a quality of engagement that distinguishes them from the merely adequate.

A Different Bet on AI

The dominant narrative of AI capability has, for years, been a story about scale: more compute, more data, larger models. This story is largely correct — scaling has driven most of the major advances of the last decade. But Anthropic has made an additional bet: that capability without aligned values is not just dangerous but ultimately limited.

A system that is very capable but unreliable — that sometimes hallucinates, sometimes refuses for unclear reasons, sometimes pursues instructions in ways that are technically correct but miss the point — is not actually as useful as its raw capabilities suggest. Trust matters. Predictability matters. Transparent reasoning about uncertainty matters. These are not soft concerns; they are product features of the first order.

Claude is not always the highest-scoring model on every capability benchmark. But in the extended, ambiguous, real-world tasks where AI assistants actually need to earn their keep, it holds up well — because it is thinking carefully about what you actually need, not just what you literally asked for.

One of Anthropic's most active research programmes involves interpretability — the attempt to understand what is actually happening inside a large language model when it processes information. The practical stakes are high: if you can understand why a model does what it does, you can identify failure modes before they cause harm, verify that the model's values are what you believe them to be, and build the kind of trust that would justify giving AI systems more autonomy over consequential decisions.

What Comes Next

Agentic capabilities — the ability to take sequences of actions, use tools, interact with external services, and operate with greater autonomy over longer time horizons — are increasingly central to where this technology is heading. The stakes are correspondingly higher. A language model that answers questions is one thing. A language model that can browse the web, execute code, send emails, and make decisions in the world is a different category of system entirely.

Anthropic's answer is to extend the same philosophy that shaped Claude's conversational capabilities into the agentic domain: careful, incremental expansion of autonomy; explicit attention to where trust must be established before capability is deployed; and a genuine commitment to keeping humans meaningfully in the loop on consequential decisions. Claude Code represents an early test of this approach, and users often report that it tends to ask for clarification rather than confidently proceeding in the wrong direction. That is a small thing, perhaps, but a meaningful signal about the disposition being built.
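Keeping humans in the loop on consequential decisions can be pictured as an approval gate in front of an agent's actions. The sketch below is a minimal illustration of that pattern and does not describe how Claude Code or any Anthropic product is implemented. The `Action` type, the `CONSEQUENTIAL` set, and the `approve` callback are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumed list of action kinds that should never run without human sign-off.
CONSEQUENTIAL = {"send_email", "execute_code", "delete_file"}


@dataclass(frozen=True)
class Action:
    kind: str     # e.g. "read_file" or "send_email"
    detail: str   # human-readable description of what will happen


def run_with_oversight(
    actions: List[Action],
    approve: Callable[[Action], bool],
) -> List[str]:
    """Run low-stakes actions directly; ask a human before consequential ones."""
    log = []
    for action in actions:
        if action.kind in CONSEQUENTIAL and not approve(action):
            log.append(f"skipped {action.kind}: {action.detail}")
            continue
        # A real agent would perform the action here; this sketch only logs it.
        log.append(f"ran {action.kind}: {action.detail}")
    return log


plan = [
    Action("read_file", "notes.txt"),
    Action("send_email", "weekly report"),
]
# A reviewer who declines everything: only the low-stakes step runs.
print(run_with_oversight(plan, approve=lambda action: False))
```

In an interactive tool the `approve` callback would typically prompt the user. Passing it in as a parameter keeps the gate testable and makes the oversight policy explicit.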

"The question is not whether AI will be capable. That battle is over. The question is whether it will be trustworthy. And trustworthiness has to be built, not just announced."

Claude is, at bottom, an argument. An argument that safety and capability are not in tension but are, in the long run, complements. An argument that an AI assistant's character matters — not just as a product differentiator, but as a genuine feature of whether the technology is good for the world. An argument that the way to build trust with AI systems is the same as the way you build trust with anything: through consistency, honesty, and demonstrated good judgment over time.

Whether Anthropic's bet will ultimately pay off remains to be seen. But the attempt is serious, the reasoning behind it is coherent, and the product it has produced is genuinely worth paying attention to — not just as a capable AI assistant, but as a proof of concept for a different way of thinking about what AI should be.